LookOut! Interactive Camera Gimbal Controller for Filming Long Takes

ACM Transactions on Graphics 2022, presented at SIGGRAPH 2022

|

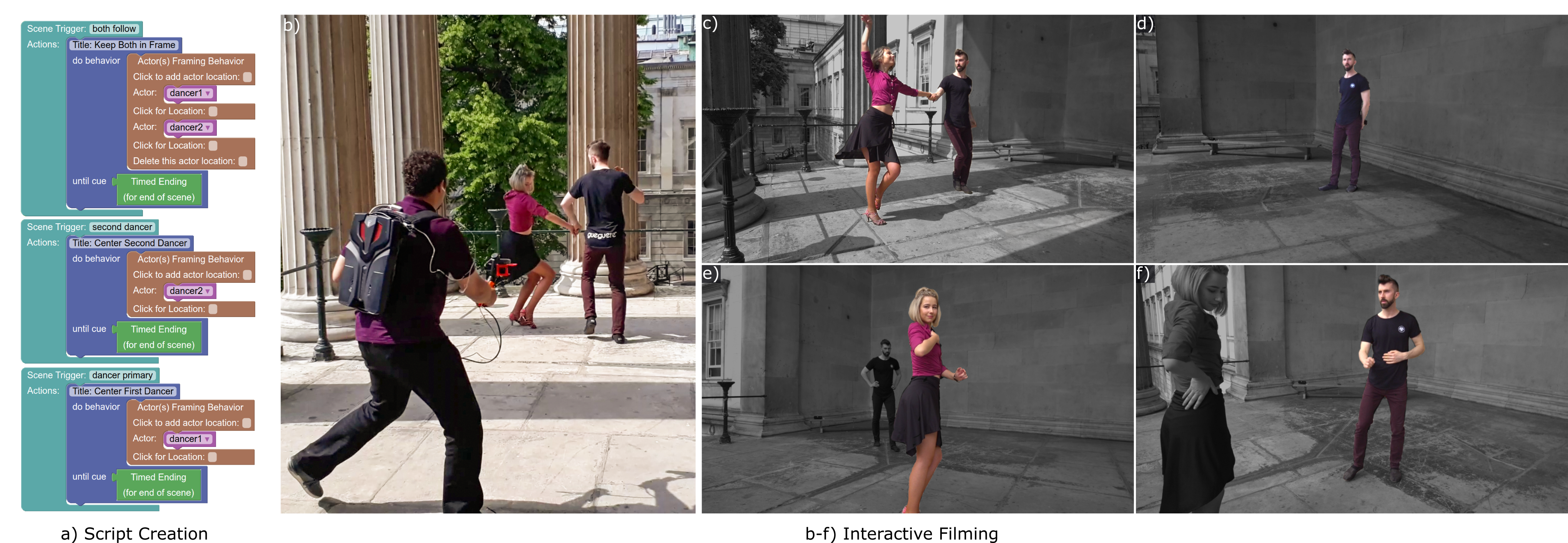

| LookOut can take over the task of controlling where the camera is pointing when a camera operator is overwhelmed with other duties on the go, dynamically changing the camera's behavior based on where actors are, how a scene progresses, and what the camera operator instructs it to do. b) The user-worn LookOut rig consists of a light backpack computer, a hand-held motorized gimbal, dual cameras (normal and wide-view), earphones, a lapel microphone, and a joystick for initial setup. Before filming, the LookOut GUI a) enables a user to pre-script where the camera should point and its focal length. This involves creating camera behavior blocks that can be chained together to make scripts, callable during filming. A behavior can be as simple as a pan, or as complex as positioning multiple subjects in different parts of the frame, and they can be sequenced with scene specific cues. On boot, LookOut guides the operator, through text-to-speech, to enroll actor identities to its visual tracker, perform scene-specific initialization, and calibrate audio. c-f) Four frames from a LookOut-captured video, but with false-coloring to visualize which actor(s) LookOut is dynamically framing via its motorized gimbal to satisfy the operator's currently selected script. At the user's instruction, LookOut frames (c) both dancers, then (d) orients the gimbal to center on the male, then the female (e), and back to the male (f). The user receives audio feedback when switching between camera behaviors. Without a field monitor, the user can watch where they're going, while trusting our controller to handle their dynamic requests. |

Abstract

The job of a camera operator is challenging, and potentially dangerous, when filming long moving camera shots. Broadly, the operator must keep the actors in-frame while safely navigating around obstacles, and while fulfilling an artistic vision. We propose a unified hardware and software system that distributes some of the camera operator's burden, freeing them up to focus on safety and aesthetics during a take. Our real-time system provides a solo operator with end-to-end control, so they can balance on-set responsiveness to action vs. planned storyboards and framing, while looking where they're going. By default, we film without a field monitor.

Our LookOut system is built around a lightweight commodity camera gimbal mechanism, with heavy modifications to the controller, which would normally just provide active stabilization. Our control algorithm reacts to speech commands, video, and a pre-made script. Specifically, our automatic monitoring of the live video feed saves the operator from distractions. In pre-production, an artist uses our GUI to design a sequence of high-level camera "behaviors." These can be specific, based on a storyboard, or looser objectives, such as "frame both actors." Then, during filming, a machine-readable script exported from the GUI is combined with the sensor readings to drive the gimbal. To validate our algorithm, we compared tracking strategies, interfaces, and hardware protocols, and collected impressions from a) film-makers who used all aspects of our system, and b) film-makers who watched footage filmed using LookOut.
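To make the idea of chained behaviors concrete, here is a minimal Python sketch of how a script of behavior blocks might be represented and turned into a gimbal command. It is an illustration only, not the LookOut implementation: the names `Behavior`, `Script`, `framing_error`, and `gimbal_rates`, the normalized image coordinates, the cue strings, and the simple proportional control law are all assumptions made for this example.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional, Tuple

Point = Tuple[float, float]  # assumed: normalized image coords, (0, 0) = top-left


@dataclass
class Behavior:
    """One hypothetical behavior block: which actors to frame, and where."""
    name: str
    actors: Tuple[str, ...]              # enrolled actor identities to frame
    target_xy: Point = (0.5, 0.5)        # desired position in the frame
    next_on_cue: Dict[str, str] = field(default_factory=dict)  # cue -> behavior


@dataclass
class Script:
    """A set of behavior blocks chained together by cues."""
    behaviors: Dict[str, Behavior]
    current: str

    def active(self) -> Behavior:
        return self.behaviors[self.current]

    def on_cue(self, cue: str) -> str:
        """Switch behavior if the active block lists this cue; else stay put."""
        self.current = self.active().next_on_cue.get(cue, self.current)
        return self.current


def framing_error(behavior: Behavior,
                  detections: Dict[str, Point]) -> Optional[Point]:
    """Offset of the visible actors' mean position from the target position.

    Returns None when none of the behavior's actors are currently detected.
    """
    pts = [detections[a] for a in behavior.actors if a in detections]
    if not pts:
        return None
    cx = sum(p[0] for p in pts) / len(pts)
    cy = sum(p[1] for p in pts) / len(pts)
    return (cx - behavior.target_xy[0], cy - behavior.target_xy[1])


def gimbal_rates(error: Optional[Point], gain: float = 1.0) -> Point:
    """Proportional (pan, tilt) rate command; holds still with no detections.

    Assumed sign convention: positive pan turns toward larger x in the image.
    """
    if error is None:
        return (0.0, 0.0)
    return (gain * error[0], gain * error[1])
```

In this sketch, a spoken command or scene cue would call `Script.on_cue`, and each video frame would pass the tracker's detections through `framing_error` and `gimbal_rates`. A real controller would also need smoothing, rate limits, and handling of focal length, none of which are modeled here.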

30s Fast Forward

Main Video

Validation Video

BibTeX

@article{sayed2022lookout,
  title = {LookOut! Interactive Camera Gimbal Controller for Filming Long Takes},
  author = {Sayed, Mohamed and Cinca, Robert and Costanza, Enrico and Brostow, Gabriel},
  year = 2022,
  month = {mar},
  journal = {ACM Trans. Graph.},
  publisher = {Association for Computing Machinery},
  address = {New York, NY, USA},
  volume = 41,
  number = 3,
  doi = {10.1145/3506693},
  issn = {0730-0301},
  url = {https://doi.org/10.1145/3506693},
  issue_date = {June 2022},
  articleno = 30,
  numpages = 16,
  keywords = {video editing, Cinematography, camera gimbal, videography}
}