Unsupervised Monocular Depth Estimation with Left-Right Consistency

Clément Godard, Oisin Mac Aodha and Gabriel J. Brostow

University College London

Learning-based methods have shown very promising results for the task of depth estimation from single images. However, most existing approaches treat depth prediction as a supervised regression problem and, as a result, require vast quantities of corresponding ground truth depth data for training. Just recording quality depth data in a range of environments is a challenging problem. In this paper, we innovate beyond existing approaches, replacing the use of explicit depth data during training with easier-to-obtain binocular stereo footage.

We propose a novel training objective that enables our convolutional neural network to learn to perform single image depth estimation, despite the absence of ground truth depth data. Exploiting epipolar geometry constraints, we generate disparity images by training our network with an image reconstruction loss. We show that solving for image reconstruction alone results in poor quality depth images. To overcome this problem, we propose a novel training loss that enforces consistency between the disparities produced relative to both the left and right images, leading to improved performance and robustness compared to existing approaches. Our method produces state-of-the-art results for monocular depth estimation on the KITTI driving dataset, even outperforming supervised methods that have been trained with ground truth depth.
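The two ideas in the abstract, reconstructing one view from the other through a disparity map and checking that the left and right disparity maps agree, can be illustrated with a minimal NumPy sketch. This is not the released implementation: the function names (`warp_horizontal`, `reconstruction_loss`, `lr_consistency_loss`), the plain L1 penalty, the linear interpolation, and the sign convention (a left pixel at column x is assumed to match the right image at column x - d) are all assumptions made for illustration on single-channel, rectified images.

```python
import numpy as np


def warp_horizontal(src, disp):
    """Sample `src` at column (x - disp) in every row, with linear interpolation.

    src:  (H, W) array to sample from.
    disp: (H, W) horizontal disparity in pixels (assumed sign: sample at x - disp).
    Sample positions outside the image are clamped to the border.
    """
    h, w = src.shape
    xs = np.arange(w, dtype=np.float64)[None, :] - disp
    xs = np.clip(xs, 0.0, w - 1.0)
    x0 = np.floor(xs).astype(int)
    x1 = np.minimum(x0 + 1, w - 1)
    frac = xs - x0
    rows = np.arange(h)[:, None]
    return (1.0 - frac) * src[rows, x0] + frac * src[rows, x1]


def reconstruction_loss(left, right, disp_left):
    """Mean L1 error between the left image and the right image warped into the left view."""
    left_hat = warp_horizontal(right, disp_left)
    return float(np.mean(np.abs(left - left_hat)))


def lr_consistency_loss(disp_left, disp_right):
    """Mean L1 difference between the left disparity map and the right
    disparity map sampled at the location the left disparity points to."""
    disp_right_in_left = warp_horizontal(disp_right, disp_left)
    return float(np.mean(np.abs(disp_left - disp_right_in_left)))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    right = rng.random((4, 8))
    disp = np.full((4, 8), 2.0)          # hypothetical constant disparity
    left = warp_horizontal(right, disp)  # synthetic left view for the demo
    print(reconstruction_loss(left, right, disp))
    print(lr_consistency_loss(disp, disp))
```

In a training setup, both terms would be computed on the disparities predicted by the network from the left image alone, so the stereo pair is only needed during training and not at test time.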

BibTeX

@inproceedings{monodepth17,
  title     = {Unsupervised Monocular Depth Estimation with Left-Right Consistency},
  author    = {Cl{\'{e}}ment Godard and
               Oisin {Mac Aodha} and
               Gabriel J. Brostow},
  booktitle = {CVPR},
  year      = {2017}
}

Acknowledgements

We would like to thank David Eigen, Ravi Garg, Iro Laina and Fayao Liu for providing data and code to recreate the baseline algorithms. We also thank Stephan Garbin for his invaluable help throughout this project and Peter Hedman for his LaTeX magic. We are grateful for EPSRC funding for the EngD Centre EP/G037159/1, and for projects EP/K015664/1 and EP/K023578/1.

pdf

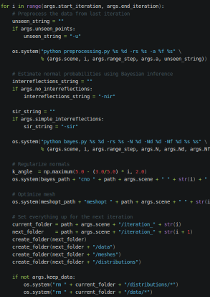

code

models

Talk
