Scalable Inside-Out Image-Based Rendering | Datasets

New!

The test paths and result images from the project video are now available for download! The output images were generated from the publicly available datasets on this page, in the following scenes: Creepy Attic, Dorm Room, Museum, and Reading Corner. The paths are stored in the Bundler file format and can be opened with recent versions of MeshLab.

Download them here (607 MB).

README

===========================================================================

==== Scalable Inside-Out Image-Based Rendering - Dataset Release ====

==== Peter Hedman, Tobias Ritschel, George Drettakis, Gabriel Brostow ====

==== SIGGRAPH Asia 2016 ====

===========================================================================

Structure-from-Motion is ambiguous with respect to orientation and scale. This means
that our SfM reconstructions do not have consistent scales and up-vectors. We automatically
compute the scale from the (metric) RGB-D images from the depth scanner. For easier navigation,
we manually estimated the up vector and the forward vector in each scene.

While we store our depth maps as 16-bit PNGs with a millimeter scale, everything else
(all the meshes and the camera poses) lives in the (arbitrary) coordinate space produced
by the SfM tool.

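Use the following code to load a depth map and convert it to the scale of the SfM reconstruction:

  // mm_to_sfm can be found in parameters.txt
  #include <string>
  #include <opencv2/opencv.hpp>

  cv::Mat load_depth_map(const std::string& path, float mm_to_sfm) {
    static const float MAX_16BIT_VALUE = 65535.f;
    // IMREAD_UNCHANGED keeps the 16-bit single-channel data; the default
    // flag would convert the image to 8-bit BGR.
    cv::Mat input = cv::imread(path, cv::IMREAD_UNCHANGED);
    cv::Mat output;
    input.convertTo(output, CV_32F, mm_to_sfm / MAX_16BIT_VALUE);
    return output;
  }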

==========================================

== Scene parameters (in parameters.txt) ==

==========================================

estimated_up      : Approximate up vector expressed in SfM coordinates.
estimated_forward : Approximate forward vector expressed in SfM coordinates.
mm_to_sfm         : Scaling factor which converts from a millimeter scale to the scale
                    of the SfM reconstruction.

Note that the up and forward vectors are not necessary. However, they are convenient

if you want the horizon to be correct when you're navigating the scene.
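Because both vectors are only approximate, they may be neither unit length nor exactly perpendicular. The sketch below shows one way to turn them into an orthonormal navigation frame with a Gram-Schmidt step that keeps the up vector fixed. The helper `make_navigation_basis` and the `NavigationBasis` struct are hypothetical names, not part of the release, and the right vector assumes a right-handed coordinate system.

```cpp
#include <array>
#include <cmath>

using Vec3 = std::array<double, 3>;

double dot(const Vec3& a, const Vec3& b) {
  return a[0] * b[0] + a[1] * b[1] + a[2] * b[2];
}

Vec3 cross(const Vec3& a, const Vec3& b) {
  return {a[1] * b[2] - a[2] * b[1],
          a[2] * b[0] - a[0] * b[2],
          a[0] * b[1] - a[1] * b[0]};
}

Vec3 normalized(const Vec3& v) {
  const double len = std::sqrt(dot(v, v));
  return {v[0] / len, v[1] / len, v[2] / len};
}

// Hypothetical container for the three axes of a navigation frame.
struct NavigationBasis {
  Vec3 right, up, forward;
};

// Keeps the up direction, removes the component of forward that points
// along up, and derives right as forward x up (right-handed assumption).
NavigationBasis make_navigation_basis(const Vec3& estimated_up,
                                      const Vec3& estimated_forward) {
  const Vec3 up = normalized(estimated_up);
  const double along_up = dot(estimated_forward, up);
  const Vec3 flattened = {estimated_forward[0] - along_up * up[0],
                          estimated_forward[1] - along_up * up[1],
                          estimated_forward[2] - along_up * up[2]};
  const Vec3 forward = normalized(flattened);
  const Vec3 right = cross(forward, up);
  return {right, up, forward};
}
```

The sketch does not guard against a forward vector that is parallel to the up vector; in that case the flattened vector has zero length and the result is undefined.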

================

== Input data ==

================

Input_Data\Camera_Images_High_Res\*.jpg    : Our input images after pre-processing
                                             (cropped, denoised, and white-balanced).
Input_Data\RGBD_Scan.oni                   : The complete RGB-D scan, stored in the
                                             OpenNI file format.
Input_Data\RGBD_Scan_As_Images\color-*.jpg : Color images extracted from the RGB-D scan.
Input_Data\RGBD_Scan_As_Images\depth-*.png : Depth images extracted from the RGB-D scan,
                                             stored in millimeters as 16-bit PNGs.

Note that the color and depth videos from the RGB-D sensor are *NOT SYNCED*. To overcome

this, we only extracted color images that have a corresponding depth frame (within 60 ms).
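The pairing rule above can be sketched as a nearest-timestamp search. This is an illustration only, not the extraction tool we used: the function name `match_color_to_depth`, the representation of timestamps as sorted millisecond values, and the inclusive 60 ms threshold are all assumptions.

```cpp
#include <algorithm>
#include <cstddef>
#include <cstdint>
#include <utility>
#include <vector>

// Returns (color index, depth index) pairs for every color frame whose
// nearest depth frame is at most max_gap_ms away.
// Assumes depth_ms is sorted in ascending order.
std::vector<std::pair<std::size_t, std::size_t>> match_color_to_depth(
    const std::vector<std::int64_t>& color_ms,
    const std::vector<std::int64_t>& depth_ms,
    std::int64_t max_gap_ms = 60) {
  std::vector<std::pair<std::size_t, std::size_t>> matches;
  for (std::size_t c = 0; c < color_ms.size(); ++c) {
    // First depth frame at or after the color timestamp.
    const auto next =
        std::lower_bound(depth_ms.begin(), depth_ms.end(), color_ms[c]);
    bool found = false;
    std::size_t best = 0;
    std::int64_t best_gap = 0;
    if (next != depth_ms.end()) {
      best = static_cast<std::size_t>(next - depth_ms.begin());
      best_gap = *next - color_ms[c];
      found = true;
    }
    // The depth frame just before the color timestamp may be closer.
    if (next != depth_ms.begin()) {
      const auto prev = next - 1;
      const std::int64_t gap = color_ms[c] - *prev;
      if (!found || gap < best_gap) {
        best = static_cast<std::size_t>(prev - depth_ms.begin());
        best_gap = gap;
        found = true;
      }
    }
    if (found && best_gap <= max_gap_ms) matches.emplace_back(c, best);
  }
  return matches;
}
```

Color frames without a close enough depth frame are simply dropped, which mirrors the behaviour described above.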

========================

== SfM reconstruction ==

========================

We store camera poses for both the RGB-D images and the digital camera images in the NVM

file format, which can be read by VisualSFM (http://ccwu.me/vsfm/).

SfM\Input\Camera_Images_Low_Res\*.jpg                : Input images downscaled by 4x,
                                                       to conserve memory and speed up
                                                       reconstruction.
SfM\Input\RGBD_Scan_As_Images_Subsampled\color-*.jpg
SfM\Input\RGBD_Scan_As_Images_Subsampled\depth-*.png : We subsample the RGB-D scan to
                                                       fewer than 500 images. This also
                                                       speeds up reconstruction.
SfM\Output\camera_poses.nvm                          : The camera poses for both the RGB-D images and
                                                       the digital camera images. Stored as an NVM file.
SfM\Output\images\color-*.jpg                        : The color components of the RGB-D images,
                                                       after correcting for radial distortion.
SfM\Output\images\*.jpg                              : The digital camera images, after correcting for
                                                       radial distortion.
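This README does not describe how the RGB-D frames were chosen for the subsampled set. If you want to produce a comparable subset from the full scan, one simple option is a uniform stride, sketched below. The function `subsample_indices` is hypothetical and is not the procedure used for this release.

```cpp
#include <cstddef>
#include <vector>

// Picks evenly spaced frame indices so that fewer than `limit` frames remain.
std::vector<std::size_t> subsample_indices(std::size_t frame_count,
                                           std::size_t limit = 500) {
  std::vector<std::size_t> kept;
  if (frame_count == 0 || limit < 2) return kept;
  const std::size_t max_kept = limit - 1;  // "fewer than limit"
  // Smallest stride that keeps at most max_kept frames (ceiling division).
  const std::size_t stride = (frame_count + max_kept - 1) / max_kept;
  for (std::size_t i = 0; i < frame_count; i += stride) kept.push_back(i);
  return kept;
}
```

Scans that already contain fewer than 500 frames are returned unchanged, since the stride evaluates to 1.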

===========================

== Global reconstruction ==

===========================

Global_Reconstruction\Fused_Point_Cloud_From_RGBD_Images.ply : All the RGB-D images fused
                                                               into a point cloud with
                                                               colors and normals. Stored
                                                               as a PLY file (binary).
Global_Reconstruction\Fused_Mesh_From_RGBD_Images.ply        : A dense mesh reconstructed
                                                               from the point cloud. Stored
                                                               as a PLY file (binary).

Note that both of these reconstructions use the same coordinate space as the

SfM reconstruction above. We use Screened Poisson Surface Reconstruction

(http://www.cs.jhu.edu/~misha/Code/PoissonRecon/Version5/)

to convert the point cloud into a dense mesh.

Both reconstructions can be loaded with e.g. MeshLab (http://www.meshlab.net/).
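If you write your own loader instead, note that a PLY file always starts with a text header, even when the payload is binary. The sketch below reads that header to report the storage format and the vertex and face counts, which is a quick way to check whether a file is binary or ASCII before parsing the data. `PlyHeader` and `read_ply_header` are hypothetical names; property declarations are skipped and the payload is not parsed.

```cpp
#include <cstddef>
#include <istream>
#include <sstream>
#include <stdexcept>
#include <string>

struct PlyHeader {
  std::string format;  // "ascii", "binary_little_endian" or "binary_big_endian"
  std::size_t vertex_count = 0;
  std::size_t face_count = 0;
};

PlyHeader read_ply_header(std::istream& in) {
  std::string line;
  // Reads one header line and strips a trailing carriage return, if any.
  auto next_line = [&]() {
    if (!std::getline(in, line)) return false;
    if (!line.empty() && line.back() == '\r') line.pop_back();
    return true;
  };

  if (!next_line() || line != "ply") throw std::runtime_error("missing PLY magic");

  PlyHeader header;
  while (next_line()) {
    std::istringstream fields(line);
    std::string keyword;
    fields >> keyword;
    if (keyword == "format") {
      fields >> header.format;
    } else if (keyword == "element") {
      std::string name;
      std::size_t count = 0;
      fields >> name >> count;
      if (name == "vertex") header.vertex_count = count;
      if (name == "face") header.face_count = count;
    } else if (keyword == "end_header") {
      return header;  // the stream is now positioned at the payload
    }
  }
  throw std::runtime_error("missing end_header");
}
```

Open the file with `std::ios::binary` so that the binary payload following the header is not altered by newline translation.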

=============================

== Per-view reconstruction ==

=============================

Per_View_Reconstruction\Depth_Maps_For_Camera_Images\*.png : Our refined depth maps for
                                                             the digital camera images.
                                                             Stored in millimeters as
                                                             16-bit PNGs.
Per_View_Reconstruction\Meshes_For_Camera_Images\*.ply     : Our local meshes for
                                                             the digital camera images.
                                                             Stored as PLY files (ASCII)
                                                             with normals and texture
                                                             coordinates.

Note that the meshes live in the SfM coordinate space also used by the global reconstruction.

The PLY meshes can be loaded with e.g. MeshLab (http://www.meshlab.net/).

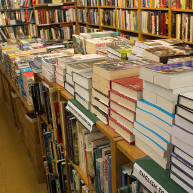

Book Shop

Book Shop Creepy Attic

Creepy Attic Dorm Room

Dorm Room Museum

Museum Play Room

Play Room Reading Corner

Reading Corner